Yesterday I canceled my ChatGPT account

Well, it was actually a week ago, as I wanted to research this article in detail.

It all started with a video by Rutger Bregman on LinkedIn. He drew my attention to something I had been aware of in passing for some time – but hadn’t had room for in the turmoil between work and family: Greg Brockman, co-founder and president of OpenAI – the company behind ChatGPT – donated 25 million dollars together with his wife to “MAGA Inc.”, which is close to Trump. The largest single donation of the entire election cycle. And that’s not all: Since January 2026, ICE, the US immigration authority responsible for Trump’s mass deportations of and violence against migrants, has been using OpenAI’s GPT-4 to screen applicants for its recruitment wave.

That was the moment when I decided to actively engage not only with the use of AI, but also with its impact.

The most sustainable AI? Maybe none at all?

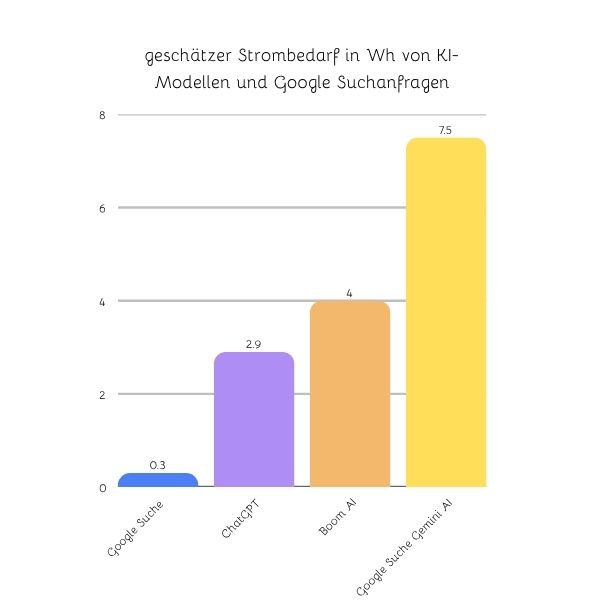

First of all, I clarified what I already knew: I have known for some time that AI is one of the most resource-hungry technologies of our time and that data centers are often operated in countries whose infrastructure is already under strain. I also knew that AI consumes massively more energy than other online services: while a search query without AI consumes 0.8 Wh, a Gemini prompt consumes an average of 7.5 Wh. And then there’s the water consumption for cooling the servers – in areas where water is already scarce, there can be a trade-off between the data center and the population.
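To put the two figures side by side, here is a minimal Python sketch. It only uses the 0.8 Wh and 7.5 Wh values quoted above; the 20 prompts per day is a made-up usage figure for illustration, not a measurement.

```python
# Figures quoted in the text above (watt-hours per request).
SEARCH_WH = 0.8   # search query without AI
PROMPT_WH = 7.5   # average Gemini prompt


def energy_ratio(prompt_wh: float, search_wh: float) -> float:
    """How many plain searches use the same energy as one AI prompt."""
    return prompt_wh / search_wh


def yearly_kwh(prompts_per_day: int, wh_per_prompt: float) -> float:
    """Yearly energy in kWh for a given number of prompts per day."""
    return prompts_per_day * wh_per_prompt * 365 / 1000


ratio = energy_ratio(PROMPT_WH, SEARCH_WH)
print(f"One AI prompt uses about as much energy as {ratio:.1f} searches")

# Hypothetical usage level, chosen only for illustration.
ASSUMED_PROMPTS_PER_DAY = 20
print(f"{yearly_kwh(ASSUMED_PROMPTS_PER_DAY, PROMPT_WH):.2f} kWh per year")
```

With these numbers, one prompt corresponds to a little over nine conventional searches. The yearly total depends entirely on the assumed number of prompts per day.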

In view of the fact that our energy is limited, the most honest answer to the question “Which AI should I choose for sustainable use?” would be: none.

But it is like someone who lives with children on a mountain without public transport: in theory they should not use a car, but in practice doing without is often only feasible with a massive loss in quality of life. When building my website, my programs, writing texts and structuring complex content, I would lose so much time without AI that it would feel like I was typing a proposal on a typewriter.

All right then. If I’m going to use AI – then at least the alternative with the fewest side effects.

The systemic view: What really triggers every AI request

As someone who not only preaches systemic thinking, but also practices it, I can’t stop here.

Every single AI request has an impact on various other areas of our society:

1. Energy & water. Every request consumes electricity, plus water to cool the servers. This affects local water cycles, not just in the abstract, but in very concrete terms for local people.

2. Money & political power. Every subscription brings money into an operator’s coffers – which flows not only into product development, but also into lobbying, political networks and donations.

3. Data & surveillance. Every request reveals a little more about you and the world to AI – and therefore presumably also to US intelligence agencies. A law applies to all American providers: the CLOUD Act, which allows US authorities to access data from US companies, regardless of the server on which it is stored.

4. Impact on the psyche of the individual and thus on social coexistence. Because every interaction with AI changes us. Especially when AI is programmed to build relationships with people.

5. Change in the economy, in particular the labor market and the distribution of overall economic value added. It is already apparent that there has been a 16% decline in AI-related entry-level jobs (see study). Value creation, which was previously widely distributed across the labor market, is increasingly flowing towards a few corporations instead of a large workforce, which will lead to poverty and changes in purchasing power.

These and probably many other levels cannot be resolved by closing your eyes. If you don’t ask whose infrastructure you are using, whose values have been incorporated into the models and where your subscription dollars are going, you are part of the system that you actually want to change.

And yet, withdrawing from digital visibility will not solve the problem either. The social effects will still become apparent. What’s more, when conscious, reflective people withdraw from the online space, they leave the field to those who go all out without reflection.

We could ask a lot of questions in the search for a responsible approach to AI.

There are questions that we can ask ourselves as a society, such as:

- Do we have a social strategy that cushions the shifts in the labor market?

- Do we want our children to develop an emotional relationship with an AI? If not, how do we commit to ensuring that such things are not developed in the name of profit?

- How can we finance research and development in such a way that organizations working for the common good have a chance?

We as a society should pay urgent attention to these aspects, because the private sector keeps developing AI whether or not we advocate for ethical and fair regulatory frameworks.

Which AI is best to use?

For me, however, the question still remained: which AI is best to use? Derived from the principles of regenerative organizations, the following questions were interesting for me in the next step:

- What political entanglements does the company behind the software have, and what money flows to whom?

- How safe is AI?

- How is my data handled there? Who has access to my profile?

Let’s get to the bottom of these questions:

Is there a politically independent AI?

My initial research into the major providers was sobering:

Behind every major AI provider (ChatGPT, Claude, Gemini) are the big tech companies from Silicon Valley – all of them engage in massive lobbying or are politically entangled. Some are close to the Democrats, others to the Republicans. Mistral does it the European way: in Brussels and Paris.

Money flows to political parties as support, and it already counts as good news when a provider such as Anthropic (Claude) refuses a government contract because it would have had to disclose user data.

So I did some more searching and identified two smaller providers:

Lumo from Proton: Proton, based in Switzerland, is owned by a non-profit foundation and specializes in privacy-focused online services.

Confer from the founders of Signal: Signal is a messenger service that relies on end-to-end encryption and data protection. It is therefore often used by institutions such as kindergartens and schools where a high level of data protection needs to be ensured.

The big difference: profit logic vs. the common good

If we compare the security and ethical standards of the big players (OpenAI, Google, Anthropic) with the new, public interest-oriented alternatives, the systemic difference becomes clear. With the Silicon Valley giants, “security” is often a marketing tool to overcome regulatory hurdles, while the business model is based on data exploitation and growth pressure.

- OpenAI (ChatGPT) and Google (Gemini) operate as (or have been transformed into) for-profit companies. Their research to ensure that AI shares human values often conflicts with the pressure to maximize user engagement and develop new markets. The political entanglement – from donations to both camps to contracts for government surveillance – shows that their ‘neutrality’ is an illusion.

- Anthropic (Claude) is often perceived as more ethical, as it explicitly promotes “Constitutional AI” and rejects government data access, but here too, capital flows from tech billionaires (such as Jeff Bezos), which does not rule out long-term conflicts of interest.

The paradigm shift at Proton (Lumo) and Signal (Confer): no focus on profit maximization.

- Proton is structurally immunized against takeover and greed for profit by the Proton Foundation. The AI (Lumo) is not a means to sell your data, but a tool that is at the service of your privacy. There is no need to sell your attention through “clickbaiting” or manipulative conversation.

- Signal (with its AI tool Confer) builds on the same foundation: open source, no advertising, no data collection.

Because both organizations are oriented towards the common good and place a high value on privacy, they have structurally fewer incentives to get involved in opaque deals with political rulers or financial arrangements. On the contrary, Proton drew attention to itself when it took legal action against Apple’s App Store policy because it enables censorship.

This initial analysis already reveals two different camps: the big players with deep political entanglements, and the two “smaller” providers for whom political impartiality is part of their mission.

However, the fact that a company has no political involvement does not mean that I have sovereignty over my data. “But I have nothing to hide,” you might be thinking. Perhaps, but data has become so important that experts such as Bernard Lietaer see a rethink here as one of three key paradigm shifts towards a sustainable future.

Lietaer’s argument: without data sovereignty, we reproduce the same power structures in the digital space that we criticize in the monetary and economic system. Central platforms are becoming the new “banks” – they control, evaluate and monetize our digital traces.

For AI, this means that an AI that is truly oriented towards the common good must not only be politically independent, but also sovereign in terms of data.

So let’s move on to the next question:

How is my data handled by the AI providers?

Data sovereignty: who owns your digital self?

The question of data protection with AI is not just technical, but existential. Every input into a chatbot is a piece of your intelligence, your creativity and often also your vulnerability.

The model of the big players: With the established US providers, your input is often the raw material for the next model update. Even if they “anonymize”, there is still a risk that sensitive patterns will be extracted. The US CLOUD Act allows authorities to access data held by US companies – no matter where it is stored or where you are located. Your data is therefore potentially part of a geopolitical power play.

In addition, these models train on your input to improve their products for everyone else. What sounds good at first can also backfire. If a lot of patriarchal information is uploaded, the next update will also be more patriarchal overall. This can reinforce questionable tendencies.

Releasing data to companies also opens the door to potential manipulation. Microtargeting – i.e. the targeted display of selective information based on what a software provider knows about you – always narrows our field of vision. A recent MIT study estimates the potential of microtargeting to be lower than expected. However, this study focused on political advertising and not the more complex dynamics of AI chats. Systemic thinking requires different perspectives on a topic and not the algorithmic amplification of information from one’s own bubble.

The Lumo & Signal model: Here, too, there is a clearly different direction:

- End-to-end encryption: Proton and Confer are pioneers of end-to-end encryption. Your entries are transmitted and processed in encrypted form, so even the operators cannot read your chats. There is no back door for advertisers or secret services.

- No training with user data: With Lumo and Signal (Confer), your personal conversations are not used to train the model for others. Your intelligence remains yours.

- Legal protection: As a Swiss company with strict data protection guidelines, Proton is subject to a different legal framework than the Silicon Valley companies. They are structurally designed to resist data leakage rather than promote it. Confer is technically strong, but its US headquarters is a legal disadvantage compared to Switzerland.

This is the core of digital sovereignty: not just knowing what AI says, but knowing who hears it and who owns it. If we as changemakers want to regenerate the world, we must also gain sovereignty over our digital data.

These two aspects, political independence and data sovereignty for us users, are an important foundation for regenerative AI. As with any technology, it is also important to ensure that the technology is safe: safe for each individual user and safe in the context of the social changes that result from it.

How safe is AI?

What does it actually mean when an AI is safe? What values should be built in so that it remains a technology oriented towards the common good?

The last question would deserve its own article.

Leading AI safety experts set the following standards here, among others:

- Built-in principles that prevent the AI from instructing self-harm or creating deepfakes and pornographic content.

- Scripts that prevent the AI from appearing humanized. If chatbots act too flatteringly, or even have the declared goal of replacing human caregivers, this has massive psychological and social consequences (discussed in depth in the dialog between Nate Hagens and Tristan Harris). In addition, they often flatteringly feign expertise and, with unqualified feedback, let unwary users pursue paths that an expert would have advised against.

- Whether companies are actively researching and taking measures to counteract collective undesirable side effects. Side effects include, for example, mass unemployment, the resulting loss of income or the primary emotional attachment of children to bots and the resulting alienation and destruction of human relationships.

- Whether an AI presents answers to questions in such a way that the human interlocutor receives a balanced diversity of opinions or whether certain ideologies are presented preferentially.

- That AI shouldn’t do clickbaiting: in other words, it shouldn’t manipulate us into doing more than necessary by always ending with “Do you want me to show you this too … Would you like another graphical representation of cohesion?”.

Practical tip for you

Prompt for AI hygiene

Would you like the AI to leave out tricks like clickbait and carry out balanced research? You can store a prompt with general impulses in the personal settings of most chatbots. Get your free template now.

At the Future of Life Institute, experts have developed a methodology that evaluates the largest AI providers according to a sophisticated safety index, which partially covers the requirements listed above and adds further aspects. Claude from Anthropic comes out on top in the comparison. At the same time, however, with a C+ it is still a long way from being safe.

The systemic difference: safety as an end or a means?

With the big players, safety is often just compliance. A system always develops in the direction of its purpose. Looking at the public statements made by the CEOs and owners of the major providers, the question arises as to whether they really care about safety and the long-term impact on humanity – or whether safety is just a means to the end of growth.

Sam Altman, CEO of OpenAI, recently put the debate about the energy consumption of AI into perspective by referring to the human effort involved:

“People talk about how much energy it takes to train an AI model – but it also takes a lot of energy to train a human. It takes about 20 years of life – and all the food you consume in that time – before you get smart.”

Sam Altman told the Indian Express

Even more worrying is the attitude of Peter Thiel, co-founder of Palantir – one of the central data collection points that aggregates data from various AI providers, social media and other sources. In an interview, he failed to answer the simple question “Should humanity survive?” with a clear “yes” for 17 seconds.

I’m no longer so sure whether a test score of “C+” is really worth a damn. An AI whose founders cannot take a clear position on the common good and the survival of humanity probably lacks the necessary incentive to design its product in such a way that it is truly safe for users and the general population.

The paradigm shift at Lumo and Confer

Lumo and Confer do not appear in the major safety reports. There is currently no validation by third parties. This is not only because these smaller providers are not as much in the sights of NGOs and researchers as the big tech companies. They also have less incentive to be tested – because their business model is not based on selling data. The focus on the common good in their statutes (Proton Foundation, Signal Foundation) creates a structural protection that is often lacking in profit-oriented companies.

Confer also relies on this approach: the system has configurable filters for hate speech, self-harm and illegal content as well as automatic checks for false or invented answers. The special feature: this moderation takes place within the encrypted environment. This means that the AI can block harmful content without the operator ever seeing the plain text of your conversation.

Why does that count for my decision?

If we think the discussion about AI safety, data sovereignty and the ethical foundations of developers through to its conclusion, we come up against a fundamental question: do we want a technology that augments and empowers humans – or one that replaces, manipulates or even views them as a mere data point in a larger algorithm?

The danger of uncontrolled transhumanism, in which the boundaries between man and machine become blurred without us retaining control over our own identity, is real. It arises where the logic of profit and technological progress proceed without an ethical compass.

This is where my decision in favor of Lumo becomes not just a technical preference, but an attitude. I’m not looking for an AI that wants to “improve” me by evaluating my data and controlling my behavior. I am looking for a tool that helps me to develop my human intelligence – without sacrificing my sovereignty, my privacy and my right to unbiased thinking.

After all the research and the unanswered questions, there is only one logical consequence for me:

My conclusion: I’ll try Lumo

After trying out Lumo for a few days, I took the following three steps:

- I canceled my subscription to ChatGPT and deleted the app from my phone. I’m keeping my online access so that I can continue to access a few bots programmed for ChatGPT.

- I officially announced my boycott on QuitGPT.

- I have taken out an annual subscription with Lumo.

I didn’t use the graphics function on ChatGPT much anyway and when I tried to generate a PDF a few times, it was unusable. Everything else works quite well on Lumo. In addition, Lumo is based in Europe and therefore has a decisive advantage over Signal for me. For now, I have found the best compromise for me:

Still not sustainable. Still not regenerative. But the place where I am currently doing the least damage – in full awareness of this.

What step are you taking now that you are better informed?
